Generalization power of the estimator (you can read more in this Kaggle Using the whole dataset, some information about the test sets areĪvailable to the train sets. You would be breaking the fundamental assumption of independence between Without using a pipeline, and then perform any kind of cross-validation, Reasons is that if you apply a pre-processing step to the whole dataset In practice, you almost always want to search over a pipeline, instead of a single estimator. > # define the parameter space that will be searched over > param_distributions = > # the search object now acts like a normal random forest estimator > # with max_depth=9 and n_estimators=4 > search. > X, y = fetch_california_housing ( return_X_y = True ) > X_train, X_test, y_train, y_test = train_test_split ( X, y, random_state = 0 ). > from sklearn.datasets import fetch_california_housing > from sklearn.ensemble import RandomForestRegressor > from sklearn.model_selection import RandomizedSearchCV > from sklearn.model_selection import train_test_split > from scipy.stats import randint.

Is over, the RandomizedSearchCV behaves asĪ RandomForestRegressor that has been fitted with

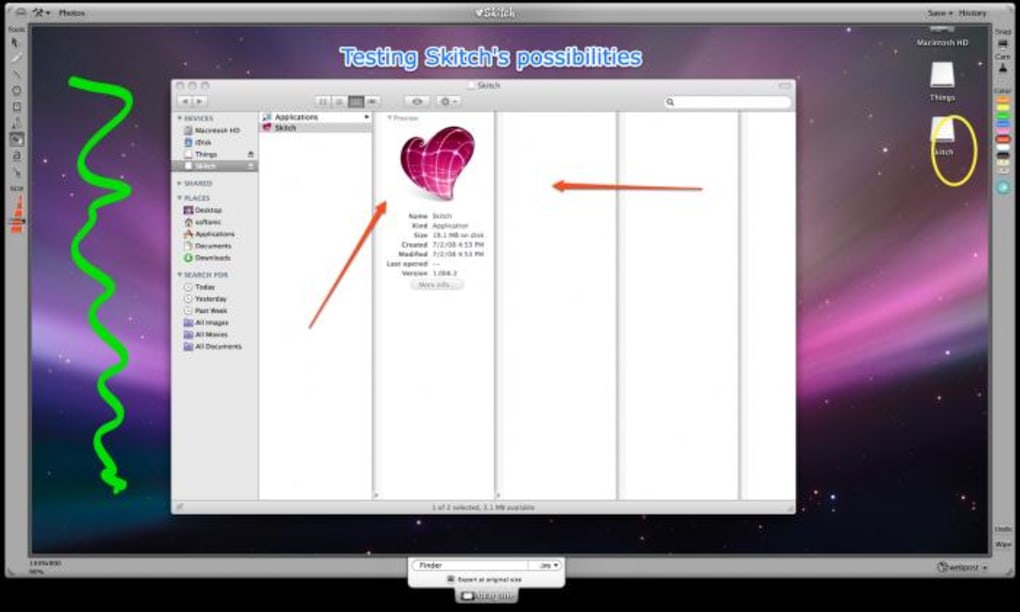

Search over the parameter space of a random forest with a Scikit-learn provides tools to automatically find the best parameterĬombinations (via cross-validation). Should be since they depend on the data at hand. Quite often, it is not clear what the exact values of these parameters Max_depth parameter that determines the maximum depth of each tree. Parameter that determines the number of trees in the forest, and a Often critically depends on a few parameters. > result = cross_validate ( lr, X, y ) # defaults to 5-fold CV > result # r_squared score is high because dataset is easy array() Automatic parameter searches ¶Īll estimators have parameters (often called hyper-parameters in the > X, y = make_regression ( n_samples = 1000, random_state = 0 ) > lr = LinearRegression (). > from sklearn.datasets import make_regression > from sklearn.linear_model import LinearRegression > from sklearn.model_selection import cross_validate. It is also possible to manually iterate over the folds, use differentĭata splitting strategies, and use custom scoring functions. We here briefly show how to perform a 5-fold cross-validation procedure, Tools for model evaluation, in particular for cross-validation. We have just seen theĭataset into train and test sets, but scikit-learn provides many other Model evaluation ¶įitting a model to some data does not entail that it will predict well on fit ( X_train, y_train ) Pipeline(steps=) > # we can now use it like any other estimator > accuracy_score ( pipe. > # load the iris dataset and split it into train and test sets > X, y = load_iris ( return_X_y = True ) > X_train, X_test, y_train, y_test = train_test_split ( X, y, random_state = 0 ). > # create a pipeline object > pipe = make_pipeline (. 
> from sklearn.preprocessing import StandardScaler > from sklearn.linear_model import LogisticRegression > from sklearn.pipeline import make_pipeline > from sklearn.datasets import load_iris > from sklearn.model_selection import train_test_split > from trics import accuracy_score. You don’t need to re-train the estimator: Once the estimator is fitted, it can be used for predicting target values of Usually 1d array where the i th entry corresponds to the target of theīoth X and y are usually expected to be numpy arrays or equivalentĪrray-like data types, though some estimators work with other Unsupervized learning tasks, y does not need to be specified.

Integers for classification (or any other discrete set of values). The target values y which are real numbers for regression tasks, or Represented as rows and features are represented as columns. Is typically (n_samples, n_features), which means that samples are The fit method generally accepts 2 inputs: fit ( X, y ) RandomForestClassifier(random_state=0) from sklearn.ensemble import RandomForestClassifier > clf = RandomForestClassifier ( random_state = 0 ) > X =, # 2 samples, 3 features.
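The advice to search over a pipeline rather than a single estimator can be sketched as follows. This is a minimal illustration under stated assumptions, not the tutorial's own example: synthetic data from make_regression stands in for a real dataset, and the parameter ranges are simply borrowed from the random forest search. The `randomforestregressor__` prefix is the step name that make_pipeline derives from the class name.

```python
from scipy.stats import randint
from sklearn.datasets import make_regression
from sklearn.ensemble import RandomForestRegressor
from sklearn.model_selection import RandomizedSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic data stands in for a real dataset (an assumption of this sketch).
X, y = make_regression(n_samples=200, n_features=5, noise=10.0, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=0)

# The scaler lives inside the pipeline, so it is re-fitted on the training
# part of every cross-validation split and never sees the held-out fold.
pipe = make_pipeline(StandardScaler(), RandomForestRegressor(random_state=0))

# Parameters of a pipeline step are addressed as "<step name>__<parameter>".
param_distributions = {
    "randomforestregressor__n_estimators": randint(1, 5),
    "randomforestregressor__max_depth": randint(5, 10),
}

search = RandomizedSearchCV(
    estimator=pipe,
    param_distributions=param_distributions,
    n_iter=5,
    random_state=0,
)
search.fit(X_train, y_train)

# After fitting, the search object behaves like the best pipeline found.
best_params = search.best_params_
test_score = search.score(X_test, y_test)
print(best_params)
print(test_score)
```

Because the search object wraps the whole pipeline, calling `search.predict` or `search.score` on new data applies the scaling learned from the training data before the forest makes its predictions.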
